Thesis proposals

These are our thesis proposals for bachelor's and master's degree students. Feel free to send us an email if you're interested.

Serialization-Based Methods and Positional Encoding for 3D Point Cloud Shape Matching

This thesis investigates serialization-based Transformer methods for 3D point clouds and the role of positional encoding in shape matching and correspondence. Recent work suggests that Transformer-based architectures, paired with effective point serialization and positional encodings, can achieve competitive performance. The project will (i) reproduce and compare recent approaches, and (ii) design a well-motivated improvement (e.g., a new positional encoding variant or a hybrid serialization strategy) validated through controlled experiments. The study will focus on correspondence robustness under typical challenges: noise, varying point density, partial shapes, and pose changes.
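As a rough illustration of what "point serialization" means here, the sketch below orders a point cloud along a Z-order (Morton) curve, one of several space-filling curves that can be used for this purpose. The function names `morton_codes` and `serialize` and the 10-bit grid resolution are assumptions made for this example, not part of any specific published method.

```python
import numpy as np

def morton_codes(points: np.ndarray, bits: int = 10) -> np.ndarray:
    """Return one Z-order (Morton) code per 3D point (hypothetical helper)."""
    # Normalize the cloud to the unit cube, then quantize to a 2^bits grid.
    mins = points.min(axis=0)
    extent = np.maximum(points.max(axis=0) - mins, 1e-12)
    grid = ((points - mins) / extent * (2**bits - 1)).astype(np.int64)

    # Interleave the bits of x, y, z: bit b of axis a lands at position 3*b + a.
    codes = np.zeros(len(points), dtype=np.int64)
    for b in range(bits):
        for axis in range(3):
            bit = (grid[:, axis] >> b) & 1
            codes |= bit << (3 * b + axis)
    return codes

def serialize(points: np.ndarray, bits: int = 10):
    """Sort points along the Z-order curve; returns (sorted points, permutation)."""
    order = np.argsort(morton_codes(points, bits), kind="stable")
    return points[order], order

if __name__ == "__main__":
    cloud = np.random.default_rng(0).random((1000, 3))
    sorted_cloud, order = serialize(cloud)
    print(sorted_cloud.shape, order[:5])
```

Points that are close along the resulting 1D sequence tend to be close in 3D, which is what lets a sequence model attend over local windows. The returned permutation can be inverted to map per-point predictions back to the original ordering.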

Data

Experiments will use established shape matching datasets, for example FAUST (human shapes; mesh-to-point-cloud variants) and SHREC’19 (human matching and correspondence). In addition, point clouds will be augmented with synthetic degradations (subsampling, noise, partiality) to emulate real acquisition conditions.
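The three degradations mentioned above could be sketched as follows. This is a minimal example under stated assumptions: the function names, the Gaussian noise model, and the way partiality is simulated (keeping the points nearest to a random viewing direction) are illustrative choices, not a prescribed protocol.

```python
import numpy as np

def subsample(points: np.ndarray, keep_ratio: float, rng: np.random.Generator) -> np.ndarray:
    """Randomly keep a fraction of the points (lower point density)."""
    n_keep = max(1, int(round(len(points) * keep_ratio)))
    idx = rng.choice(len(points), size=n_keep, replace=False)
    return points[idx]

def add_noise(points: np.ndarray, sigma: float, rng: np.random.Generator) -> np.ndarray:
    """Perturb every coordinate with zero-mean Gaussian noise of std sigma."""
    return points + rng.normal(0.0, sigma, size=points.shape)

def make_partial(points: np.ndarray, keep_ratio: float, rng: np.random.Generator) -> np.ndarray:
    """Keep only the points with the smallest depth along a random direction.

    This is a crude stand-in for single-view acquisition (an assumption of
    this sketch); it does not model true occlusion.
    """
    direction = rng.normal(size=3)
    direction /= np.linalg.norm(direction)
    depth = points @ direction
    n_keep = max(1, int(round(len(points) * keep_ratio)))
    return points[np.argsort(depth)[:n_keep]]

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    cloud = rng.random((2000, 3))
    degraded = make_partial(add_noise(subsample(cloud, 0.5, rng), 0.01, rng), 0.6, rng)
    print(degraded.shape)
```

Passing the random generator explicitly keeps each degraded variant reproducible, which matters when the same corrupted inputs must be shared across all compared methods.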

Expected Outcomes

- A reproducible benchmark comparing serialization + positional encoding choices for point cloud correspondence.

- Clear ablation results identifying which design choices matter most (accuracy vs. efficiency).

- One validated improvement over a baseline, with code and experimental report.

References (State of the Art)

- Raganato, A., Pasi, G., Melzi, S. (2023). Attention and positional encoding are (almost) all you need for shape matching. Computer Graphics Forum (SGP).

- Wu, X. et al. (2024). Point Transformer V3: Simpler, Faster, Stronger. CVPR.

From Noise to Image: a Study into the Impact of Noise in Image Generation

Modern text-to-image (T2I) pipelines can now generate near-photorealistic images. As their popularity has grown since 2020, extensive research has focused on improving visual realism. In contrast, the equally critical issue of faithfulness to the prompt, along with the underlying mechanisms that shape and constrain the generation process, has received comparatively little attention.

Diffusion models work by gradually adding noise to input data through a forward process, transforming it into pure Gaussian noise. A neural network is then trained to reverse this process, starting from random noise and generating new samples that match the original data distribution. This reverse process is what is commonly associated with the generation of images.
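The forward process described above has a well-known closed form in the DDPM formulation: a sample at step t is a weighted mix of the clean data and Gaussian noise. The sketch below assumes a linear noise schedule with commonly used default values; the function names and the schedule parameters are assumptions for illustration, and real models may use different schedules.

```python
import numpy as np

def make_alpha_bar(num_steps: int = 1000, beta_start: float = 1e-4,
                   beta_end: float = 0.02) -> np.ndarray:
    """Cumulative product of (1 - beta_t) for a linear beta schedule (assumed)."""
    betas = np.linspace(beta_start, beta_end, num_steps)
    return np.cumprod(1.0 - betas)

def forward_diffuse(x0: np.ndarray, t: int, alpha_bar: np.ndarray,
                    rng: np.random.Generator):
    """Sample x_t = sqrt(alpha_bar_t) * x0 + sqrt(1 - alpha_bar_t) * eps."""
    eps = rng.standard_normal(x0.shape)
    xt = np.sqrt(alpha_bar[t]) * x0 + np.sqrt(1.0 - alpha_bar[t]) * eps
    return xt, eps

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    alpha_bar = make_alpha_bar()
    image = rng.random((3, 32, 32))  # placeholder for a real image
    for t in (0, 500, 999):
        xt, _ = forward_diffuse(image, t, alpha_bar, rng)
        # The signal weight shrinks toward zero as t grows.
        print(t, float(np.sqrt(alpha_bar[t])))
```

At the last step almost no signal is left, so the sample is close to pure Gaussian noise. The network is trained to predict the noise `eps` from `xt`, which is what makes the reverse process possible.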

Most state-of-the-art models generate samples through a deterministic process: starting from a fixed initial noise, the model follows a specific trajectory to generate a single, corresponding output image. In the context of text-to-image generation, this process is further guided by a textual description, which, alongside the initial noise, directs the model toward creating an image that matches the provided prompt.

Interestingly, the initial noise (or “seed”) used in diffusion models often influences certain visual characteristics of the generated image, such as composition, pose, or background, regardless of the prompt. For example, if you generate an image of a “dog” using a specific seed, and then use the same seed to generate a “cat,” you may notice that both subjects appear in similar positions and share common visual traits. This demonstrates how the seed itself can impose structural similarities across different generated images.
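To make the role of the seed concrete: the seed only determines the initial noise tensor, and the prompt never enters that step. The sketch below uses NumPy as a stand-in for a real pipeline's random generator; the function name `initial_latent` and the latent shape `(4, 64, 64)` are assumptions chosen for illustration.

```python
import numpy as np

def initial_latent(seed: int, shape=(4, 64, 64)) -> np.ndarray:
    """Draw the starting Gaussian noise from a seeded generator (hypothetical helper)."""
    return np.random.default_rng(seed).standard_normal(shape)

if __name__ == "__main__":
    # Same seed, two different prompts: the starting noise is identical,
    # because the prompt is not an input to the noise sampling.
    noise_for_dog = initial_latent(seed=42)
    noise_for_cat = initial_latent(seed=42)
    print(np.array_equal(noise_for_dog, noise_for_cat))

    # A different seed gives a different starting point.
    print(np.array_equal(noise_for_dog, initial_latent(seed=43)))
```

Any structure that two such generations share must therefore come from the common starting noise and how the denoising trajectory uses it, which is the effect this thesis sets out to characterize.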

Over the past year, the seed’s role in image generation has emerged as a central topic in generative image synthesis. This raises a fundamental question: independently of the prompt, which aspects of the generated image does the seed actually control? Answering this question will be the main focus of this thesis.

Expected Outcomes

- Deep understanding of how generative models, particularly diffusion models, work, with a specific focus on the role of noise in the generation process.

- Experimental analysis of common features across images generated with the same seed, from edges to color distribution, composition, and more.

References

- Rombach, R., et al. (2022). High-resolution image synthesis with latent diffusion models. Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR).

- Xu, K., Zhang, L., Shi, J. (2025). Good seed makes a good crop: Discovering secret seeds in text-to-image diffusion models. IEEE/CVF Winter Conference on Applications of Computer Vision (WACV).

- Karthik, S., et al. (2023). If at first you don’t succeed, try, try again: Faithful diffusion-based text-to-image generation by selection. arXiv preprint arXiv:2305.13308.

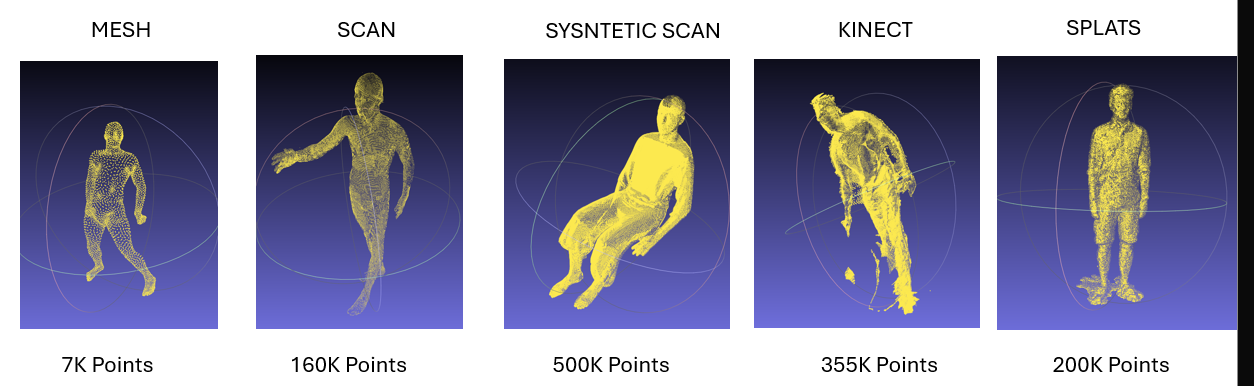

A Multi-Source Human Point Cloud Dataset for Shape Correspondence Benchmarking

This thesis aims to build and curate a benchmark dataset of human point clouds acquired from multiple heterogeneous sources, including high-quality meshes, dense 3D scans, Kinect-style depth sensors, and Gaussian splats. Specifically, all acquisitions will be registered to a common SMPL body model, enabling the generation of ground-truth correspondences across shapes and modalities. From this unified representation, both sparse point-to-point maps and dense registrations will be derived, even across large pose variations and different acquisition conditions. Ultimately, the resulting benchmark will be used to evaluate existing point cloud shape matching algorithms, assessing their robustness under realistic cross-source scenarios where traditional methods are known to struggle.
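One way to picture how a shared template yields ground-truth correspondences is sketched below. It assumes each acquisition comes with a template mesh already registered to it (`reg_a`, `reg_b`, with the same vertex ordering), and it uses brute-force nearest-neighbor search for brevity. The function names are hypothetical, and a real pipeline would likely use a spatial index and reject points that lie far from the template.

```python
import numpy as np

def nearest_index(queries: np.ndarray, targets: np.ndarray) -> np.ndarray:
    """For each query point, index of the closest target point (brute force)."""
    d2 = ((queries[:, None, :] - targets[None, :, :]) ** 2).sum(axis=-1)
    return d2.argmin(axis=1)

def correspondences(points_a, reg_a, points_b, reg_b) -> np.ndarray:
    """Map each point of acquisition A to a point index in acquisition B.

    reg_a and reg_b are the template vertices registered to A and B; row i of
    both arrays is the same template vertex (assumption of this sketch).
    """
    # 1. Attach each point of A to its closest template vertex.
    template_ids = nearest_index(points_a, reg_a)
    # 2. Look up where those template vertices sit in B's registration.
    landing = reg_b[template_ids]
    # 3. Snap to the closest actual point of B.
    return nearest_index(landing, points_b)

if __name__ == "__main__":
    reg_a = np.array([[0.0, 0, 0], [1, 0, 0], [0, 1, 0]])
    reg_b = reg_a + np.array([5.0, 0, 0])  # same template, different placement
    points_a = reg_a + 0.01                # noisy samples near the template
    points_b = reg_b[[2, 0, 1]]            # B stores its points in another order
    print(correspondences(points_a, reg_a, points_b, reg_b))
```

Because the template vertex index is the only thing shared between the two acquisitions, the same procedure applies regardless of whether A and B come from meshes, scans, depth sensors, or splats.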

Data

Multi-source human point clouds from existing repositories (SHREC’19, BEHAVE) and synthetic acquisitions, registered to the SMPL body model. Sources include meshes, 3D scans, synthetic scans, Kinect captures, and Gaussian splats.

Expected Outcomes

- A curated multi-source human point cloud dataset with consistent SMPL-based registration.

- Quantitative evaluation of existing shape matching baselines under cross-source conditions.

References (State of the Art)

- Melzi, S., et al. (2019). SHREC 2019: Matching Humans with Different Connectivity. Eurographics Workshop on 3D Object Retrieval.

- Loper, M., et al. (2015). SMPL: A Skinned Multi-person Linear Model. ACM Trans. Graph., 34(6).

- Bhatnagar, B.L., et al. (2022). BEHAVE: Dataset and Method for Tracking Human Object Interactions. arXiv. https://arxiv.org/abs/2204.06950

Deep Learning for Volumetric Functional Maps

This thesis aims to extend deep learning methods for shape correspondence from surface to volumetric domains. Specifically, the project will build upon the functional map framework, which has recently been generalized to volumetric data through the eigenfunctions of the volumetric Laplace operator. Starting from existing deep functional map architectures designed for surfaces, the student will adapt and redesign the feature extraction and map estimation pipelines to operate on tetrahedral meshes and volumetric representations. Ultimately, the resulting methods will be evaluated on volumetric shape matching benchmarks, comparing their accuracy against both classical surface-based and volumetric baselines.
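For readers new to the framework, the core map estimation step can be sketched as a small least-squares problem: descriptors on both shapes are projected onto truncated bases, and the functional map is the matrix that best aligns the two sets of coefficients. The sketch below is a simplification under stated assumptions: it ignores mass matrices and regularization terms, the bases are random orthonormal matrices rather than Laplacian eigenfunctions, and the function name is hypothetical. In the volumetric setting the bases would come from the volumetric Laplace operator on a tetrahedral mesh.

```python
import numpy as np

def estimate_functional_map(phi_x, phi_y, feat_x, feat_y) -> np.ndarray:
    """Least-squares functional map C such that C @ A is close to B.

    phi_x: (n_x, k) basis on shape X, phi_y: (n_y, k) basis on shape Y,
    feat_x: (n_x, d) and feat_y: (n_y, d) corresponding descriptor functions.
    """
    a = np.linalg.pinv(phi_x) @ feat_x  # (k, d) spectral coefficients on X
    b = np.linalg.pinv(phi_y) @ feat_y  # (k, d) spectral coefficients on Y
    # Solve A^T C^T = B^T in the least-squares sense.
    c_t, *_ = np.linalg.lstsq(a.T, b.T, rcond=None)
    return c_t.T

if __name__ == "__main__":
    rng = np.random.default_rng(0)
    k, d = 5, 20
    # Random orthonormal bases stand in for Laplacian eigenfunctions.
    phi_x, _ = np.linalg.qr(rng.standard_normal((100, k)))
    phi_y, _ = np.linalg.qr(rng.standard_normal((120, k)))
    c_true = rng.standard_normal((k, k))
    coeffs = rng.standard_normal((k, d))
    feat_x = phi_x @ coeffs
    feat_y = phi_y @ (c_true @ coeffs)
    c_est = estimate_functional_map(phi_x, phi_y, feat_x, feat_y)
    print(np.allclose(c_est, c_true))
```

In deep functional map architectures the descriptors `feat_x` and `feat_y` are produced by a learned feature extractor, and this solve sits inside the network as a differentiable layer. Adapting that extractor to volumetric inputs is the part this thesis would redesign.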

Data

Tetrahedral mesh datasets from the volumetric functional maps literature, including volumetric versions of established surface benchmarks (e.g., FAUST, SHREC).

Expected Outcomes

- Adaptation of deep functional map architectures to volumetric domains.

- Experimental comparison with surface-based and classical volumetric methods on shape matching tasks.

- Analysis of the benefits of volumetric spectral information for learning-based correspondence.

References (State of the Art)

- Donati, N., et al. (2020). Deep Geometric Functional Maps: Robust Feature Learning for Shape Correspondence. CVPR 2020. https://openaccess.thecvf.com/content_CVPR_2020/papers/Donati_Deep_Geometric_Functional_Maps_Robust_Feature_Learning_for_Shape_Correspondence_CVPR_2020_paper.pdf

- Maggioli, F., et al. (2025). Volumetric Functional Maps. arXiv. https://arxiv.org/abs/2506.13212

Attention Meets Discrete Evolution: Geometry-Aware Feature Extraction on 3D Shapes

This thesis explores the connection between attention mechanisms and Discrete Evolution Processes (DEPs) on 3D shapes. Specifically, the project will study the formal analogies between DEP operators and attention heads, investigating how geometric priors inspired by evolution processes can inform the design of attention-based architectures. From this analysis, the goal is to explore whether such connections can lead to more geometry-aware and parameter-efficient models for shape analysis.

Data

Standard 3D shape benchmarks from the literature, such as FAUST, SCAPE, and SHREC datasets for non-rigid shape matching.

Expected Outcomes

- Formal analysis of the relationship between DEP operators and attention mechanisms on meshes.

- A geometry-aware attention architecture based on DEP parameterization.

- Evaluation of compact iterative attention strategies for shape feature extraction.

References (State of the Art)

- Melzi, S., et al. (2017). Discrete Time Evolution Process Descriptor for Shape Analysis and Matching. ACM. https://www.lix.polytechnique.fr/~maks/papers/dep-final.pdf

- Riva, A., et al. (2024). Localized Gaussians as Self-Attention Weights for Point Clouds Correspondence. arXiv. https://arxiv.org/abs/2409.13291